Synthetic monitoring in CI/CD has become a key practice for modern DevOps teams that want to deploy fast without sacrificing stability. Today, pipelines automate build, testing, and deployment, but many critical failures still slip through because validation focuses on isolated code and technical checks, rather than on the functional behavior of the system in a real or simulated environment before and after each release.

This is where synthetic monitoring fits naturally. It is not about measuring real user behavior (that is the role of RUM), but about validating the real behavior of the system by automatically simulating users, flows, and critical calls. This article explains how to integrate synthetic monitoring into CI/CD, what to validate at each stage, and how to prevent a deployment from breaking production.

Introduction: why CI/CD needs modern synthetic validations

CI/CD pipelines have dramatically improved delivery speed, but they have also introduced a new risk: releasing changes faster than we are able to validate them under real conditions.

Unit and integration tests are necessary, but not sufficient. Many issues only appear when the system:

- Executes complete end-to-end flows

- Communicates with real APIs

- Interacts with databases

- Depends on external services

- Operates under real configurations

Common examples are database connection errors and connection pool exhaustion under increased simulated load, issues that unit tests typically miss.

Synthetic monitoring in CI/CD helps close this gap by validating that critical system flows continue to work correctly before and after every deployment.

What is synthetic monitoring and why it fits CI/CD

Synthetic monitoring consists of running active tests that simulate real interactions with the system: API calls, flow executions, response validations, and performance measurements.

Unlike traditional tests, it:

- Is not limited to validating isolated functions

- Validates the system as a whole

- Can run continuously

- Detects operational issues, not just code errors

This makes it an ideal complement to CI/CD, where the goal is not only to ensure that code compiles, but that the system works correctly after every change.

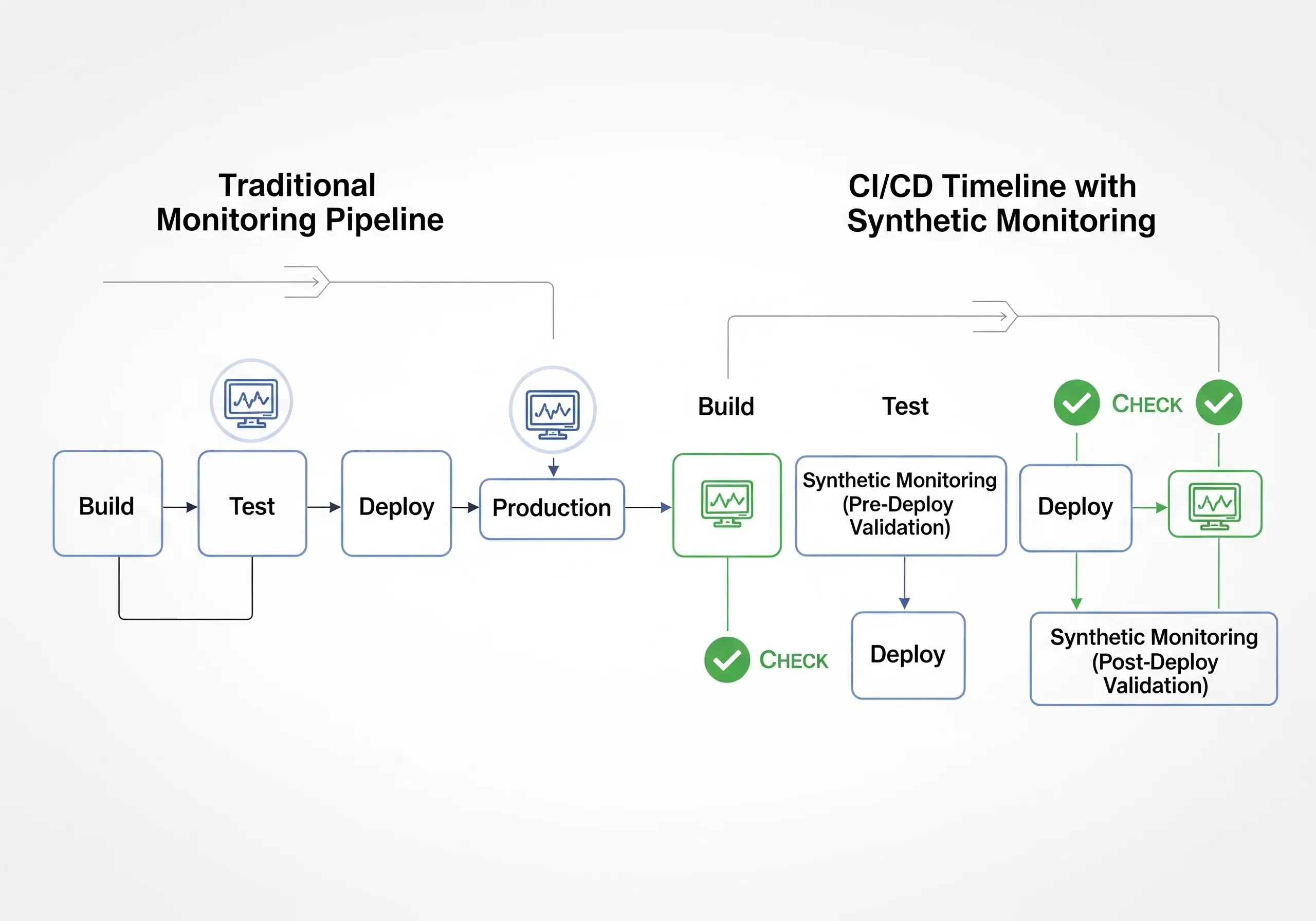

The role of synthetic monitoring within the pipeline

Integrating synthetic monitoring into CI/CD means introducing automated validations at key points in the pipeline:

- Before deployment (pre-deploy)

- Immediately after deployment (post-deploy)

- Continuously in production

Each of these moments serves a distinct and critical purpose.

Pre-deploy validations: ensuring the release is not broken from the start

Before deploying a change, synthetic monitoring can validate that essential flows continue to work in staging or pre-production environments.

Login and authentication validation

Simulating a login helps detect issues with credentials, tokens, sessions, or identity integrations before they reach production.

Critical flow validation

In e-commerce, critical flows include cart and checkout. In SaaS, they include creating, editing, or using core features.

Critical API tests

Synthetic monitoring can execute calls to internal and external APIs, validating response times, status codes, and payloads.
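
As a rough illustration, the three validations named above (status code, response time, payload) could be expressed as one function. This is a minimal sketch, not any tool's API: `ApiResponse`, `ApiExpectation`, the 800 ms limit, and the field names `id` and `status` are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class ApiResponse:
    """Hypothetical view of an HTTP response captured by a synthetic check."""
    status_code: int
    elapsed_ms: float
    payload: dict


@dataclass
class ApiExpectation:
    """What the check expects; every default here is an illustrative assumption."""
    status_code: int = 200
    max_elapsed_ms: float = 800.0
    required_fields: tuple[str, ...] = ("id", "status")


def validate_response(response: ApiResponse, expected: ApiExpectation) -> list[str]:
    """Return readable failure messages; an empty list means the check passed."""
    failures = []
    if response.status_code != expected.status_code:
        failures.append(
            f"status {response.status_code}, expected {expected.status_code}"
        )
    if response.elapsed_ms > expected.max_elapsed_ms:
        failures.append(
            f"latency {response.elapsed_ms:.0f} ms exceeds {expected.max_elapsed_ms:.0f} ms"
        )
    missing = [name for name in expected.required_fields if name not in response.payload]
    if missing:
        failures.append(f"payload missing fields: {', '.join(missing)}")
    return failures
```

Returning a list of failures rather than a single boolean keeps the pipeline log useful: a failed check can report every broken expectation at once.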

Early regression detection

Small changes can break complete flows. Synthetic tests detect these regressions before the release.

Integrating these pre-deploy validations drastically reduces the risk of releasing critical errors.

Post-deploy validations: confirming the system works under real conditions

Even if everything works in staging, production reality can be different. Configuration differences, real data, or external dependencies can introduce failures.

Post-deploy synthetic monitoring allows teams to:

- Execute complete flows immediately after the release

- Confirm that critical paths remain operational

- Detect issues that only appear in production

- Validate performance against SLO/SLI thresholds

The SLO/SLI check confirms that a key latency measure (for example, the 95th percentile) stays within its defined objective, so it doubles as an immediate performance regression check.
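
A 95th percentile check of this kind can be sketched in a few lines. The example below assumes the post-deploy run yields a list of latency samples in milliseconds and uses the nearest-rank definition of a percentile; the function names and the thresholds are illustrative, not taken from any specific tool.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("at least one latency sample is required")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted samples
    return ordered[rank - 1]


def within_slo(samples_ms: list[float], threshold_ms: float, pct: float = 95) -> bool:
    """True when the chosen latency percentile does not exceed the objective."""
    return percentile(samples_ms, pct) <= threshold_ms
```

With 20 samples, one slow outlier does not breach a 95th percentile objective, but two do. That is the point of using a percentile rather than the maximum: the check tolerates an isolated spike while still catching a consistent slowdown.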

These validations serve as an automated safety net after every deployment.

On the concept of “real behavior” in CI/CD

One distinction needs to be clear. Synthetic monitoring does not measure real user behavior; that is the role of RUM tools, which analyze real sessions, devices, and browsers.

Synthetic monitoring validates the real behavior of the system by simulating users and flows in a controlled and repeatable way. This distinction is crucial in CI/CD, which requires consistency and automation rather than real-world user variability.

Quality gates: preventing deployments from breaking production

One of the most powerful uses of synthetic monitoring in CI/CD is as a quality gate.

A quality gate is an automatic condition that determines whether a pipeline can continue or must stop. For example:

- If the login flow fails, do not deploy

- If checkout does not complete correctly, roll back

- If an API exceeds a response time threshold, block the release

Synthetic monitoring allows teams to define these gates based on real functionality, not just isolated technical metrics.

When a quality gate fails, the pipeline should signal orchestration tools such as Spinnaker or ArgoCD to trigger an automatic rollback or pause a canary release. This shortens the time users are exposed to the failure and, with it, the mean time to recovery (MTTR).
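
The gate logic itself can stay very small. The sketch below assumes the synthetic run reports one pass/fail result per flow and that the pipeline knows which stage it is in. The `Action` names and the stage labels `pre-deploy` and `post-deploy` are assumptions for illustration; how a rollback is actually triggered depends on the deployment tool in use.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    BLOCK = "block"        # stop before anything is deployed
    ROLLBACK = "rollback"  # undo a release that is already live


def evaluate_gate(results: dict[str, bool], stage: str) -> Action:
    """Map synthetic check results to a pipeline action.

    results maps a flow name (for example "login") to True when it passed.
    stage is "pre-deploy" or "post-deploy"; both labels are assumptions of this sketch.
    """
    if stage not in ("pre-deploy", "post-deploy"):
        raise ValueError(f"unknown stage: {stage}")
    if not results:
        raise ValueError("a gate with no check results must not pass silently")
    if all(results.values()):
        return Action.PROCEED
    return Action.BLOCK if stage == "pre-deploy" else Action.ROLLBACK
```

In a pipeline step, the returned action would typically be turned into an exit code (zero for `PROCEED`, non-zero otherwise) so the CI system stops the job on its own.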

How to automate synthetic monitoring within CI/CD pipelines

Integrating synthetic monitoring into CI/CD does not require manual processes. Everything can be automated.

API-based execution

Synthetic tests can be triggered via API calls from the pipeline, integrating with GitHub Actions, GitLab CI, Jenkins, or CircleCI.
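
As a sketch of what such a trigger might look like from a pipeline script, the function below builds an HTTP request with Python's standard library. The endpoint URL, the JSON fields `flow` and `environment`, and the bearer-token scheme are all hypothetical; the real values come from the documentation of whichever monitoring provider is used.

```python
import json
import urllib.request

# Hypothetical endpoint; replace it with the one your monitoring provider documents.
TRIGGER_URL = "https://monitoring.example.com/api/synthetic-runs"


def build_trigger_request(flow: str, environment: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that asks for one synthetic run."""
    body = json.dumps({"flow": flow, "environment": environment}).encode("utf-8")
    return urllib.request.Request(
        TRIGGER_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

The pipeline step would then send the request with `urllib.request.urlopen(request, timeout=30)`, reading the token from a CI secret rather than from the repository.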

Validation scripts

Pipelines can wait for the result of a synthetic test and decide whether to proceed or fail.
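
One way to implement the waiting step is a polling loop with a deadline. In this sketch, `fetch_status` is a caller-supplied function (hypothetical here) that returns `"running"`, `"passed"`, or `"failed"` for the run being watched; the status strings and the default timings are assumptions.

```python
import time
from typing import Callable


def wait_for_result(
    fetch_status: Callable[[], str],
    timeout_s: float = 300.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until the run reports "passed" or "failed"; treat a timeout as a failure."""
    deadline = time.monotonic() + timeout_s
    while True:
        status = fetch_status()
        if status == "passed":
            return True
        if status == "failed":
            return False
        if time.monotonic() + interval_s > deadline:
            return False  # no verdict in time: fail closed rather than deploy unverified
        sleep(interval_s)
```

Treating a timeout as a failure is a deliberate choice: a gate that passes when the monitoring service is unreachable would defeat its purpose. The `sleep` parameter exists only so the loop can be tested without real delays.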

Conditional execution

More exhaustive tests can be executed only for certain releases or critical branches.
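
A simple selection rule might look like the following. The flow names, the idea of a "smoke" versus "full" suite, and the branch conventions (`main`, `release/...`) are assumptions for illustration; teams will have their own.

```python
# Illustrative suites; the flow names are placeholders.
SMOKE = ["login", "checkout"]
FULL = SMOKE + ["search", "profile-update", "payments-api"]


def select_flows(branch: str, is_release: bool) -> list[str]:
    """Run the exhaustive suite for releases and critical branches, the smoke suite otherwise."""
    critical = branch == "main" or branch.startswith("release/")
    return list(FULL) if (is_release or critical) else list(SMOKE)
```

Keeping the rule in one function makes it easy to review which branches pay for the slower, more exhaustive run.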

Automated reporting

Results can be stored and compared across releases to detect progressive degradation.
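
Progressive degradation is easy to miss because each release may stay under its own threshold. As a sketch, assume one stored latency value per release for a given flow, ordered oldest first. The window of four releases and the 10% tolerance below are arbitrary illustrative defaults.

```python
def is_degrading(history_ms: list[float], window: int = 4, tolerance: float = 0.10) -> bool:
    """Flag a flow whose latency rose on every one of the last `window` releases
    and grew by more than `tolerance` (as a fraction) across that window."""
    if window < 2:
        raise ValueError("window must cover at least two releases")
    if len(history_ms) < window:
        return False  # not enough history to judge
    recent = history_ms[-window:]
    rising = all(later > earlier for earlier, later in zip(recent, recent[1:]))
    return rising and recent[-1] > recent[0] * (1 + tolerance)
```

For example, a history of 200, 205, 212, and 224 ms is flagged: no single release looks alarming, yet latency has risen on every release and is 12% above where it started.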

This turns synthetic monitoring into a native part of the pipeline, not an external step.

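Real-world examples: preventing failures introduced by releases

Small change that breaks a complete flow

A frontend change altered an HTML selector. Unit tests passed, but the checkout flow stopped working. Synthetic monitoring in CI/CD detected the failure and blocked the deployment.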

External API update

A dependency changed its response format. Synthetic monitoring detected the issue in staging and prevented it from reaching production.

Post-release performance degradation

A release introduced a more expensive database query. Post-deploy synthetic tests detected the latency increase before users noticed.

These scenarios show how synthetic monitoring in CI/CD prevents real incidents, not just theoretical errors.

How UptimeBolt runs synthetic tests on every release

UptimeBolt allows teams to integrate synthetic monitoring directly into CI/CD pipelines through simple, automatable API calls.

With UptimeBolt, you can:

- Define synthetic flows for login, checkout, APIs, and critical processes

- Run them automatically before and after every deployment

- Get clear, actionable results

- Use results as quality gates

- Detect anomalies and early degradations

- Correlate results with other monitors (HTTPS, APIs, databases, etc.) to deliver fast, centralized root cause analysis (RCA) in a single dashboard

This ensures that every release is validated not only at the code level, but at the level of real system behavior.

If you want to integrate synthetic monitoring into your CI/CD and prevent deployments from breaking production, sign up and get a free trial.

Conclusion: CI/CD without synthetic monitoring is deploying blindly

Modern pipelines automate deployment, but without synthetic validations, critical blind spots remain. Synthetic monitoring in CI/CD enables teams to validate real flows, detect regressions, enforce quality gates, and drastically reduce operational risk.

Today, deploying fast is no longer enough. What truly matters is deploying with confidence. Integrating synthetic monitoring into CI/CD is one of the most effective steps to achieve this and to build more stable, resilient, and scalable systems.
